Research into AI-powered facial-recognition technology continues to advance rapidly, with applications ranging from CCTV security cameras to Apple's Face ID on iOS, which have been in the hands of consumers and private organizations for years. Ethical questions about how facial-recognition software is used remain widespread, and a new survey shows that many AI researchers view the potential applications of their own studies with ethical skepticism.

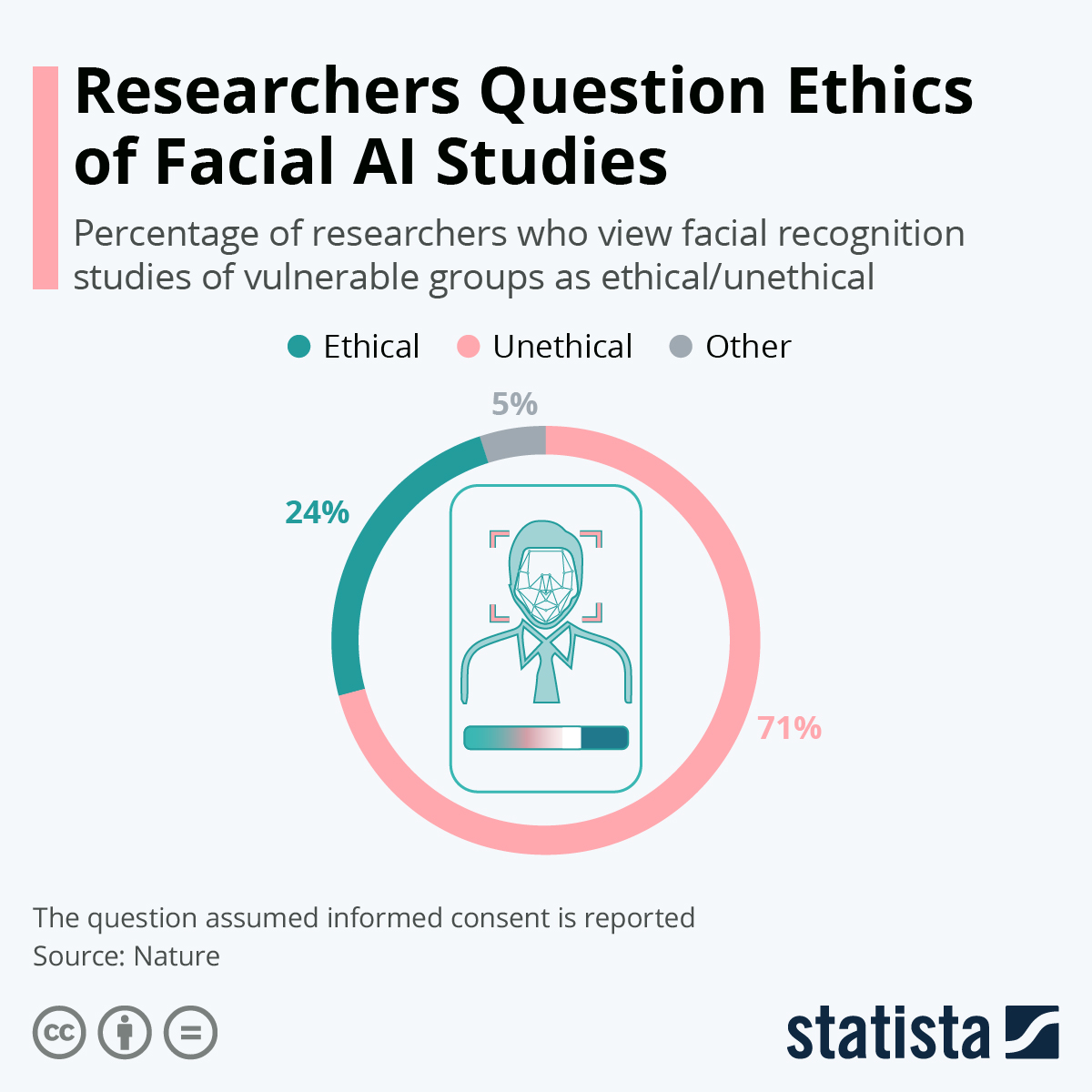

A new survey published in Nature shows that a large majority of researchers around the world view the use of facial-recognition technology on vulnerable groups and populations as unethical, even when informed consent is given. Seventy-one percent of the 480 researchers surveyed worldwide said it was unethical, while 26 percent said it was either ethical or could be ethical, while acknowledging that some current uses are unethical.

Facial-analysis studies cover a broad range of topics, with some aimed at determining human facial and body characteristics and others focused on accurately predicting aspects of identity. Consumer uses, such as on the iPhone, offer extra layers of security and access, while many other uses involve law enforcement and widespread database tracking. Much of the progress in facial-recognition technology has come from social media, where millions of pictures are continuously analyzed and used to train software in pattern recognition and deep learning.

The survey cited facial-recognition studies involving the Muslim Uyghur population in Xinjiang, China, as an example of unethical use. The question is whether Uyghurs, a vulnerable population with many members detained in camps, can freely give proper informed consent for such studies, and this has led to serious ethical doubts among scientists. Concerns have grown recently over tech companies in China using facial recognition to track vulnerable groups like the Uyghurs, as informed-consent laws in the country are fairly broad and vague.